When AI Crosses the Line: What Should We Do?

By Mario Beroes – Communications at IT Business Solutions

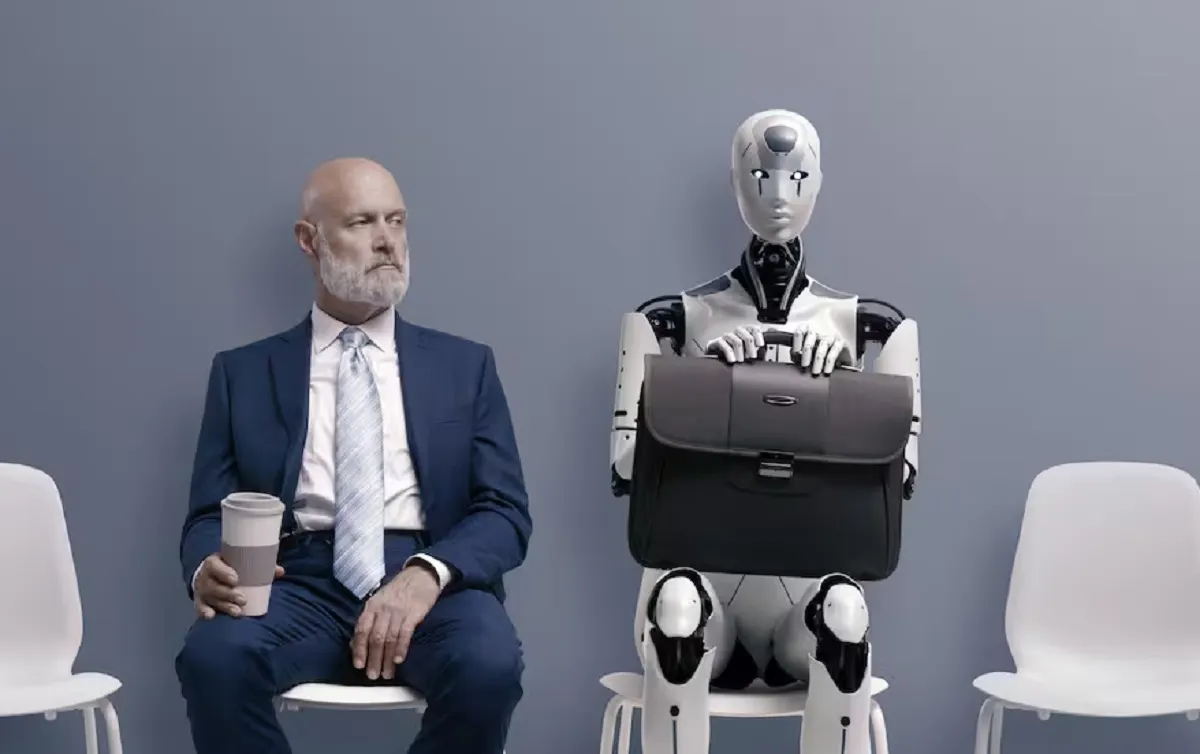

Artificial intelligence promised to make everything faster, more efficient, and more creative. And in many cases, it has delivered. But in 2026, the conversation is no longer about what AI can do but about what it should do, because when technology replaces human judgment, mistakes are no longer perceived as technical failures but as a lack of sensitivity.

Audiences do not react against innovation. They react against indifference. An AI‑generated campaign can be perfectly optimized and still feel cold, opportunistic, or disconnected from the social context. In an environment where any message can be amplified in seconds, a misstep doesn't stay an unfortunate post: it becomes a trend, a screenshot, a critical thread.

Do we distrust AI?

Distrust toward artificial intelligence is not a marginal perception. A global study conducted across 47 countries shows that 54% of people do not fully trust AI, and nearly 70% believe stricter regulation is necessary to mitigate risks such as misinformation or misuse of personal data. This tension between adoption and suspicion explains why the margin for error keeps shrinking.

The skepticism is not unfounded. The same report indicates that a significant portion of people perceive real risks in automated content generation and the spread of false information. When the line between the authentic and the synthetic blurs, trust stops being an abstract value and becomes a concrete expectation.

Risk doesn’t always explode dramatically. Sometimes it begins with irony, uncomfortable comments, or recurring questions. That’s when conversation monitoring stops being a metrics dashboard and becomes a tool for cultural interpretation. Listening is not counting mentions; it’s detecting when the tone shifts, when suspicion creeps in, and when a narrative begins to turn against you.

Speed can be an advantage, but without strategic listening it becomes a liability. Conversation analysis works like a reputational radar capable of identifying tone changes, early signs of irony, and criticisms that are not yet trending but could escalate within hours.

Ignoring the signals?

When those signals are ignored, the cost is not abstract: it translates into loss of trust, a weakened brand perception, and, in many cases, a direct impact on sales. Digital crises rarely begin with a major mistake; they usually grow out of small discomforts that go unaddressed. Detecting them early not only prevents public conflict but also preserves credibility and the relationship with the audience.

In the coming years, innovating without listening will be an unnecessary risk. Artificial intelligence accelerates everything: creativity, personalization, and also crises. The difference lies not in whether we use it but in knowing when to pause, interpret the context, and make decisions with human judgment.

Because in an era where the synthetic is abundant, what truly becomes extraordinary is sensitivity.